By Bruno Quinart

Unboxing an embeddable analytical database.

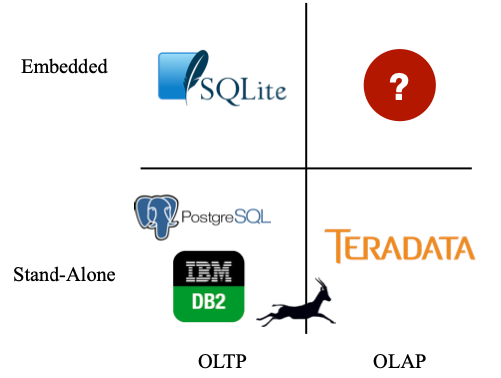

DuckDB is a recent addition in the analytical database world. And it takes an interesting approach: it wants to be the SQLite for analytics.

DuckDB was developed by Mark Raasveldt and Hannes Mühleisen, two database researchers at the Centrum Wiskunde & Informatica (CWI) in Amsterdam, the Dutch National Research Institute for Mathematics and Computer Science.

CWI is not just any research institute. For a few decades now, the team has been pushing the analytical database forward. MonetDB showed the benefits of columnar storage for analytics and Vectorwise introduced vectorized query execution.

There is even a direct link to the current stars of analytical databases. Marcin Żukowski, the main author of Vectorwise, became a co-founder of Snowflake and Databricks in 2017 opened an office in Amsterdam to hire a number of experts from the Vectorwise and MonetDB projects.

Next to that, a certain Guido Van Rossum was at CWI when he created a programming language called Python which might ring a bell.

A fertile ground for database innovation!

The motivation for building DuckDB is the observation by the authors that data scientists are mostly working with dataframe libraries like Pandas and dplyr and not using a proper database build on many years of fundamental database research. Using a database means new dependencies to connect, authentication to manage, transfers of data in and out, etc. These frictions need to be addressed for a database to be considered. So they went back to the easiest database to use: SQLite. They took the concept of an in process database, but build it for analytics.

But what do we mean exactly with analyticals? As the DuckDB website explains: such workloads are characterized by complex, relatively long-running queries that process significant portions of the stored dataset, for example aggregations over entire tables or joins between several large tables. Changes to the data are expected to be rather large-scale as well, with several rows being appended, or large portions of tables being changed or added at the same time.

DuckDB follows the SQLite approach but does not share much underneath. DuckDB builds on a number of open source predecessors. For example it uses the PostgreSQL parser, the initial design was inspired by Peloton (a research driven database project from Carnegie Mellon) and the initial Multi-Version Concurrency Control was based on Hyper (again a research driven database from Technische Universität Munchen, purchased by Tableau).

The key component is the columnar-vectorized query execution engine. Which means that during query processing work is done with data in columns and the construction of actual rows is postponed till the very end of the query execution. This allows more efficient processing by the CPU of large amounts of data. Combined with a number of other techniques and features like parallel execution, a state-of-the-art optimizer and SQL support for arbitrary and nested correlated subqueries, window functions and complex types, makes DuckDB indeed a good fit for analytical workloads.

DuckDB also has it own columnar, single file storage format. This can be easy for some embedded scenarios. However I personally don't believe this would be used that often for the data science scenario.

The salaries of the authors are paid from taxes and they see it as their duty to society to make the results of their work freely available to anyone. Therefore DuckDB is released under the very permissive MIT License.

There is also a non-profit DuckDB Foundation that collects funds for the further development and holds much of the intellectual property. Next to that there is now also DuckDB Labs, co-founded by the two authors, that provides some services around the product.

The aspect I most appreciate is the focus on making it all lightweight and simple to operate. There is no external dependencies and the whole code is available as two files: a header and an implementation file, a so-called "amalgamation".

The permissive license and the lightweightness means that going forward DuckDB could appear in some unexpected places.

One use case we recently worked with was for a need to be able to execute arbitrary complex analysis on parquet data on an object store but with strong availability requirements. In this case, the total data for individual requests was always reasonable. Including DuckDB as library into the existing microservices setup means we can rely on the existing mechanisms to keep the service available and scale in function of load. Obtaining something similar with for example Presto would be more complicated and require the introduction of a number of new components.

Finally, with regards to the initial use-case: single node data-science. It works as announced: simple and fast. Note that next to explicit SQL, there is also a programmatic API in both Python and R that allows you to construct the transformation step by step. However, especially in Python, there is now already a number of projects trying to improve pandas: Dask, Polars, Clickhouse to name just a few. Arrow also to some extent. However, having to think about when to use these different engines and juggling between them also adds friction.

I am looking forward to how Substrait could help removing this friction. It aims to provide a standardised intermediate query language (lower level than SQL) to connect frontend user interfaces like SQL or data frame libraries with backend analytical computing engines. It is linked to the Arrow ecosystem.

Something like Ibis or Fugue could become the front and DuckDB the backend engine.