By Tim Leers

What is MLOps?

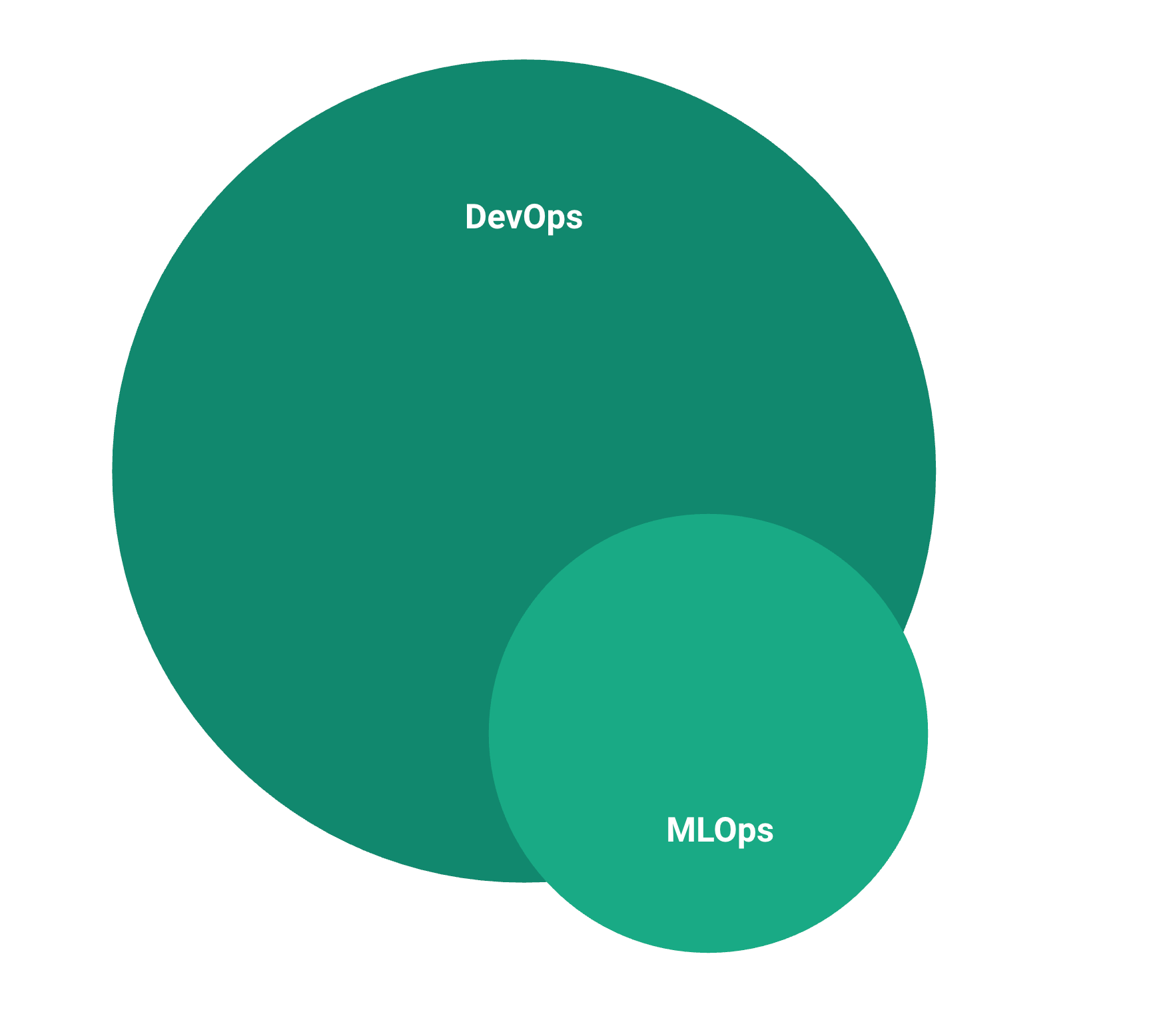

Machine Learning Operations (MLOps) can be treated as a subset of challenges in software Development Operations (DevOps), with the latter encompassing software engineering best practices and principles used to streamline the process of delivering software in companies.

MLOps concentrates on the unique challenges brought about by the development of ML-powered projects and products, particularly due to the nascent state of ML, artifact management and reproducibility issues, unique infrastructure requirements, a perpetual need for experimentation & monitoring, and the control for data domain instability.

MLOps engineers are usually occupied with enhancing ML model deployment and value creation efficiency and impact, within and across teams, focusing on the processes that typically experience tension when involving a feedback loop between developer and operation teams. In essence, MLOps aims to minimize friction across the project lifecycle between these teams to reduce the time to value and maximize the impact of team effort.

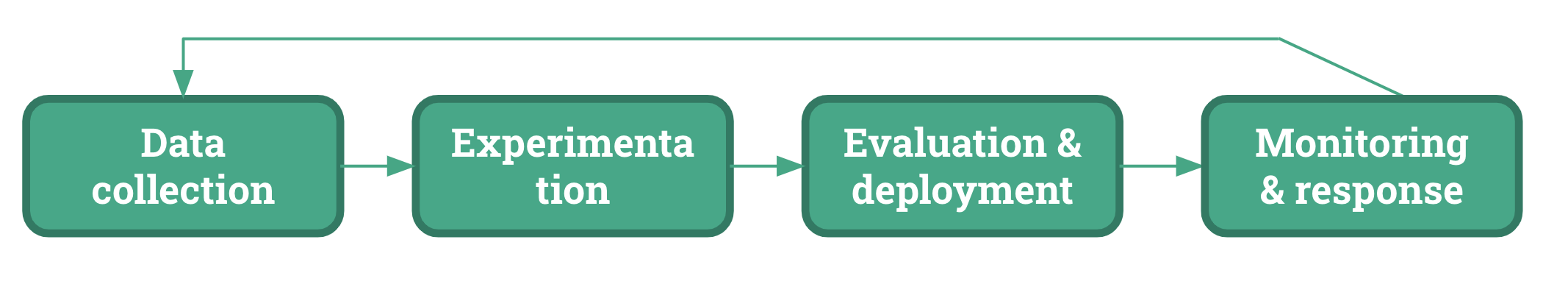

The machine learning engineer workflow, going from initial development to eventual deployment and integration into the value chain, generally consists of 4 core tasks:

Figure 1: ML Engineer workflows. Figure adapted from Shankar et al. (2020)

An open problem in today's solution ecosystem is the standardization of this workflow. Different problem domains, data modalities, and industry applications may necessitate deviations from, additions to, or varying emphasis on these tasks.

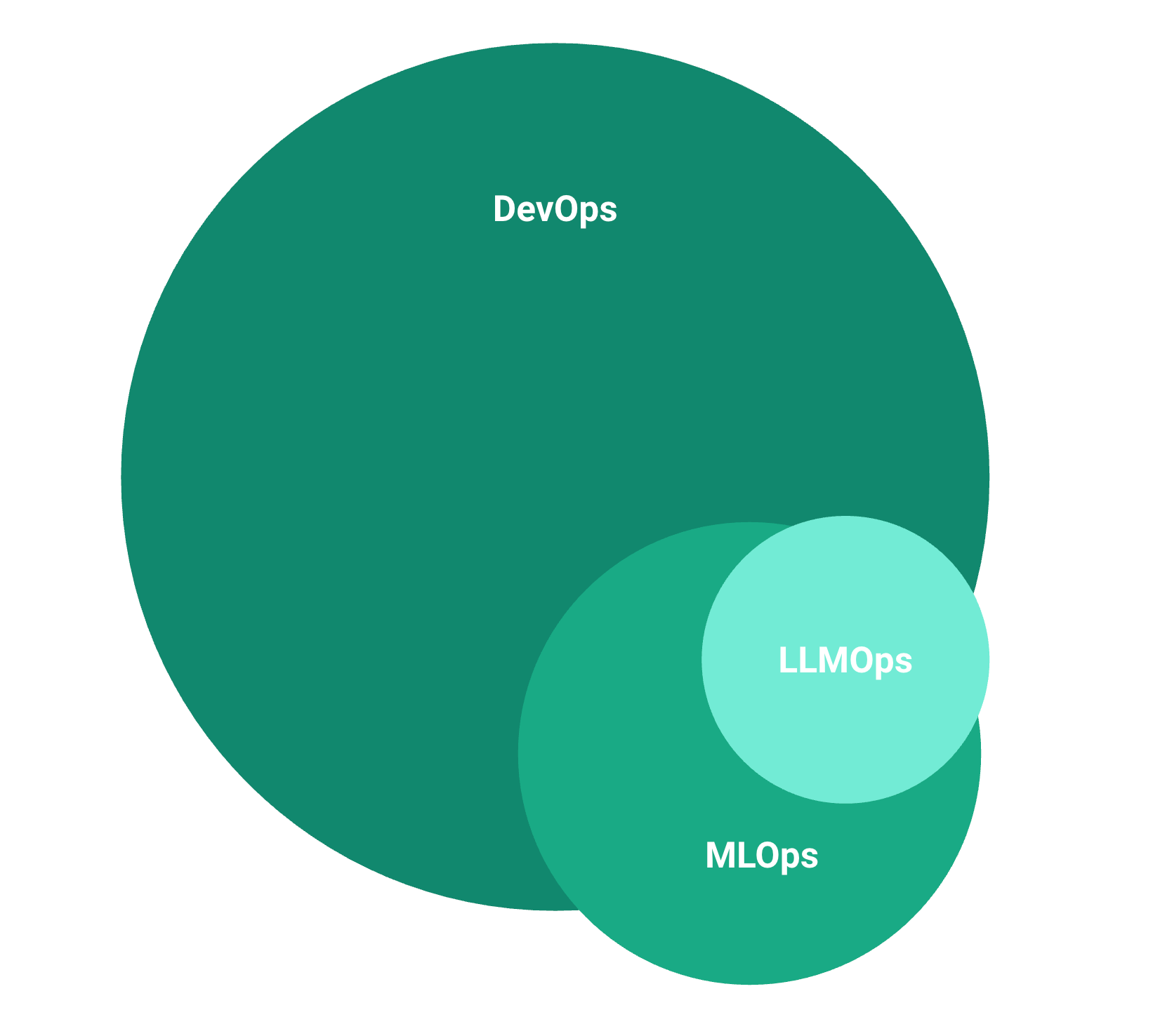

The impact of the large-language model paradigm shift on MLOps

LLMOps is situated in the same realm as MLOps, but brings new dimensions to similar tasks. In a way, LLMOps is just another flavour of the workflow in Figure 1, but due to significant deviations from task conventions, including training, deployment & maintenance, LLMs demand a different conceptualization and discussion.

In the transition from MLOps to LLMOps, an analysis of differences at the task-level highlights the need for adaptations and expansions of traditional ML tasks. These adjustments will be crucial for effectively operationalizing LLMs within the enterprise realm.

| Task | MLOps (from Shankar et al. 2020) | LLMOps |

|---|---|---|

| Data Collection and Labeling | Sourcing new data, wrangling data, cleaning data, and data labeling (outsourced or in-house). | Requires larger scale data collection and emphasizes data diversity and representativeness. May need automated or semi-automated labeling techniques, such as pre-trained models for data annotation, active learning, or weak supervision methods. |

| Feature Engineering and Model Experimentation | Improving ML performance through data-driven or model-driven experiments, such as creating new features or changing model architecture. | Feature engineering becomes less relevant due to LLMs' ability to learn effective feature representations from raw data, for the near future shifting towards prompt design and fine-tuning. Model experimentation continues to play a crucial role but will return to the earlier days of the data science evolution in the short-term, centered around getting consistently performing models for a specific use case, requiring quick iteration speeds to create value. Long-term, unclear where we are heading in this space due to the rapid advancements & shifts in LLM capabilities. |

| Model Evaluation and Deployment | Computing metrics (e.g., accuracy) over a validation dataset. Deployment includes staging, A/B testing, and keeping records for rollbacks. | Evaluation and deployment are more nuanced, requiring a broader set of metrics and techniques to assess fairness, robustness, and interpretability, not just accuracy. These could include "golden test sets", which are human-validated feedback on narrow questions/tasks. Deployment needs robust tools for managing the training data, training process, versioning of models, and possibly switching between different models depending on the use case. Drift detection systems and measures to handle adversarial attacks or misaligned inputs are also crucial. The complexity of these tasks may necessitate new roles or specialized skills. |

| ML Pipeline Monitoring and Response | Tracking live metrics, investigating prediction quality, patching the model with non-ML heuristics, and adding failures to the evaluation set. | Involves tracking model performance across multiple tasks, languages, and domains using tools like "watcher models", which are other LLMs or ML models that automatically evaluate output for real-time monitoring. Monitoring for potential biases, ethical issues, or unintended consequences. Responding to issues may involve adjusting the prompt, fine-tuning the model on new examples or edge cases, or even retraining the model |

This table will change significantly in the near future as our knowledge and capabilities in LLMOps become more refined.