By Tim Leers

As we alluded to in our trends post, the number of researchers, developers and companies focusing on eXplainable AI (XAI) is growing faster every year.

There are three main drivers behind this evolution.

1. XAI is a significant competitive advantage in a market where only a few players harness its capabilities.

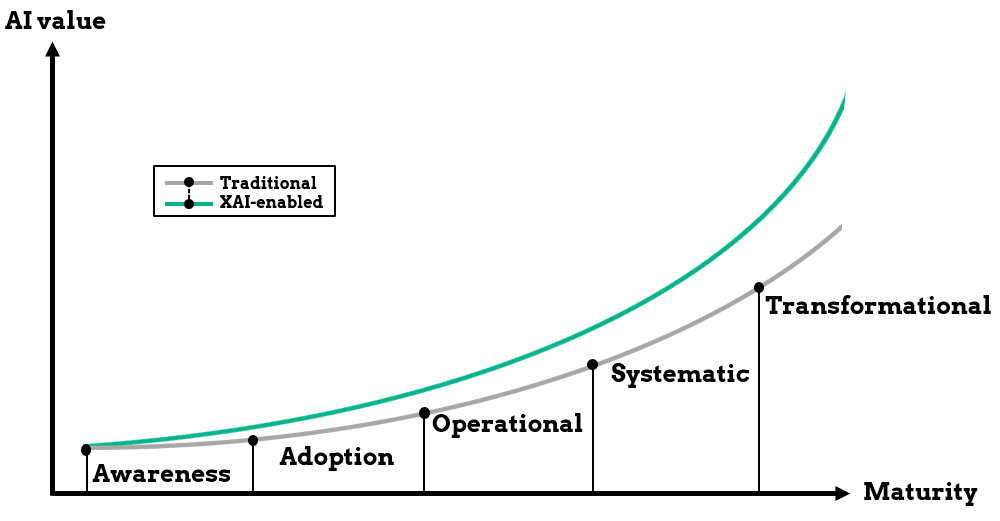

Getting the most out of AI solutions requires stakeholder trust at every level, and XAI is a key means of building that trust. XAI also acts as a catalyst for a company's journey up the AI maturity curve, and it adds value at any given level of maturity.

For example, XAI can provide deeper insight into existing AI solutions, allowing companies to derive more value from them without extensive new investment. It can also enable new applications, such as human-in-the-loop approaches, in which AI and humans collaborate on challenges that neither can solve satisfactorily on its own. So, what is stopping companies from adopting XAI?

- In large corporations, integrating AI-driven decisions into business processes may require extensive coordination and investment. This is made harder when internal divisions sit at very different points on the AI maturity curve.

- In general, companies have not yet progressed far enough up the AI maturity curve and lack a clear path to integrating XAI into their AI solutions and business processes. However, increasingly mature, industry-ready solutions are making that integration feasible.

2. The software ecosystem supporting AI applications has advanced to the point where XAI is among the largest remaining obstacles to AI adoption and integration.

Despite the increasing maturity of AI as a technology, we are still far from being able to make many AI solutions satisfactorily understandable in a straightforward way. For example, how do we make sure an AI model keeps performing as intended over time, without harming either the bottom line or its end users?

Although a growing body of work is dedicated to tackling such problems, it often takes a mix of domain experts and developers to interpret the output of contemporary XAI methods and translate it into understandable, non-technical explanations. Many emerging solutions reduce, or even remove, the need for domain experts or developers, but they do not always provide explainability at the level a stakeholder or regulator expects. This takes us to our next point.

3. Regulators are turning their attention to the rapidly growing risks posed by the AI-driven transformation of industries.

AI adoption is on the rise and, as a result, is affecting ever larger segments of society. In response, European regulators are mandating interpretability and transparency to offset the potentially harmful effects on EU citizens.

In 2016, the EU significantly reshaped the landscape of data-driven solutions by adopting the GDPR, prompting industry to adapt its policies and technologies to comply. Stricter enforcement of the GDPR's "right to explanation" would require additional investment in existing XAI technologies, as well as systems that show users how automated AI decision-making affects them.

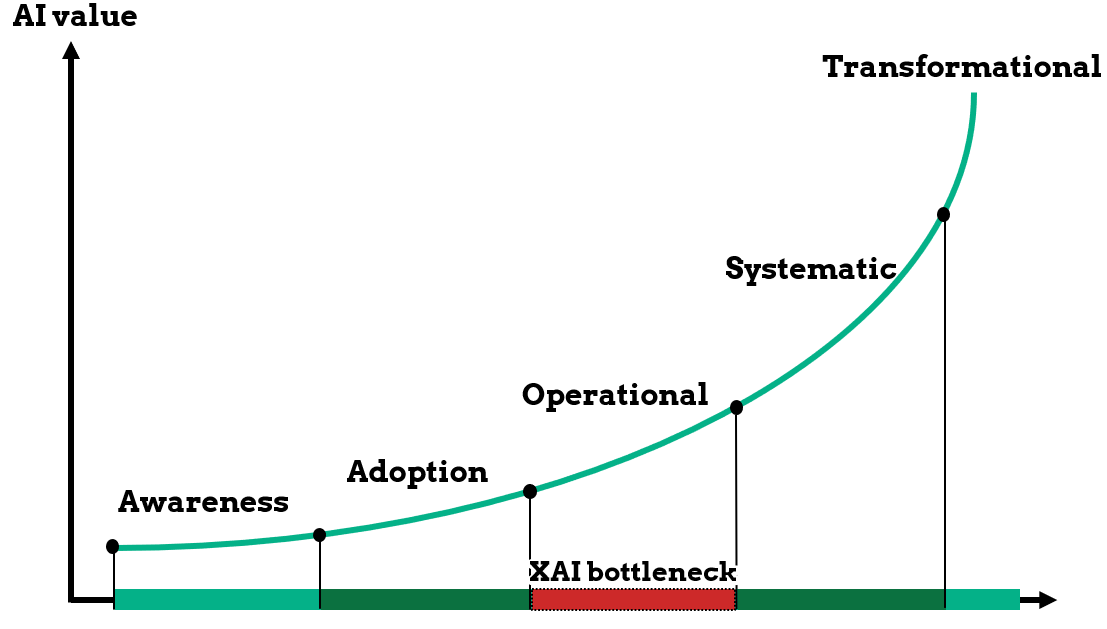

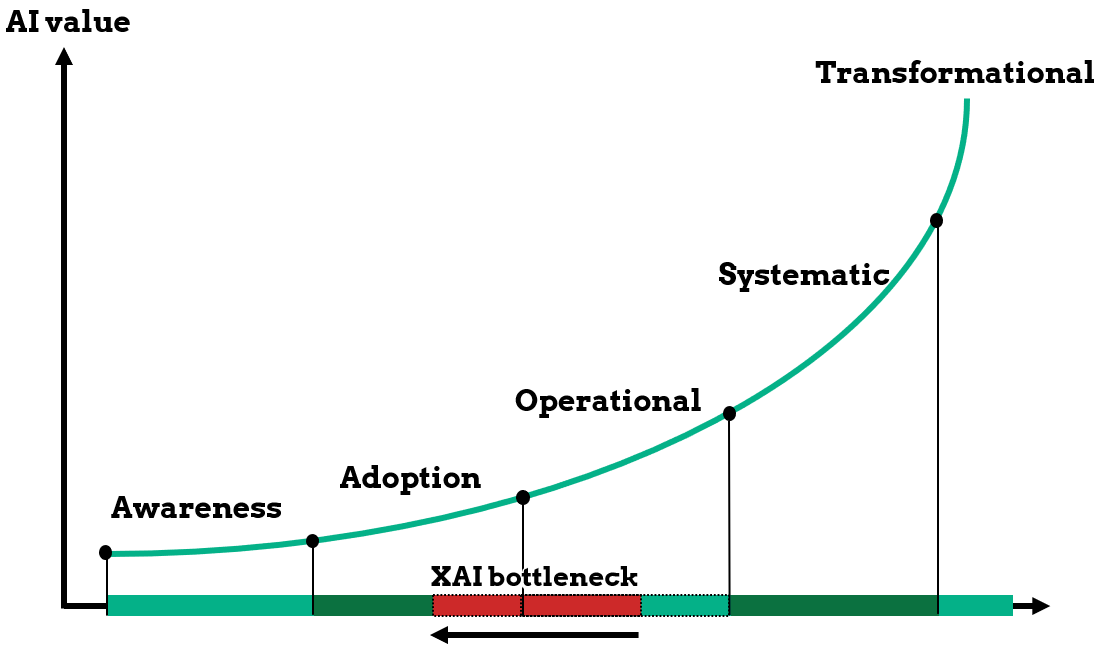

In 2021, European legislators announced their intention to further regulate applications of AI through the "Artificial Intelligence Act", which will drive the need for AI insight, transparency and governance. XAI may become a severe bottleneck, especially for companies that have yet to integrate AI into their business processes (that is, companies moving from the adoption phase to the operational phase).

Moreover, the new European AI Act will require entirely novel XAI methods to be developed for some industries, especially those operating in high-risk contexts, pushing the XAI bottleneck forward into even the early adoption phases.

In conclusion, XAI will continue to attract growing investment in the near future. For companies already leveraging AI solutions, early investment can ensure the continuity of their service offering. For others, the XAI bottleneck may pose a significant barrier to entry. At dataroots, we are actively working towards a more favorable status quo, with established XAI standards for specific business contexts and risk levels, to help companies accelerate their journey up the AI maturity curve.

Our data strategy and machine learning experts are actively developing XAI-as-a-service so your company can effortlessly clear the XAI barrier. Contact us if you would like to learn more about how this applies to your business case.

At dataroots research, we actively monitor the latest trends in research and industry to develop industry-ready tooling and integrate it into our existing service offering, so you can benefit from best-in-class XAI for your business goals.

For a more technical deep dive into XAI trends from NeurIPS 2021 (December 2021), one of the top AI conferences, take a look at our latest post.

Are you a student passionate about XAI? Our first XAI intern has just started and will help us with XAI research and development. Interested in an internship? Take a look at our open opportunities: https://careers.dataroots.io/