By GUPPI

Joining the bandwagon

Recently, text-to-image models have been sprouting all around the internet. It started with the release of DALL-E 2, a model created by OpenAI.

DALL·E 2 is a new AI system that can create realistic images and art from a description in natural language

Many other similar models were released quickly after DALL-E. It went so fast that I'm not even sure which models were released when. The most famous ones are probably DALL-E, obviously, Midjourney, a model developed by Midjourney, and Stable diffusion, an open-source model from Stability.ai. If you now google ‘text-to-image’, you will find a plethora of tools that offer this service, with different pricing mechanisms. Some are fully open-source and allow you to generate your images locally, some are subscription-based, and some are based on quotas.

Whatever the pricing formula, these models are revolutionising and democratising imagery, making image generation and editing very easy and accessible to anyone. It is very difficult to predict how this will evolve and what will be the future of these types of models. Yet we already see a lot of interests and activity happening.

I wanted to try it myself and make my own opinion about these models. So I embarked on the journey of creating a logo with DALL-E. Why DALL-E is a very arbitrary choice. I subscribed to the waiting list and was lucky enough to get an account for a free trial of

DALL-E. I must say that the interface is very user friendly and easy to use. So, I chose the path of least resistance.

My journey creating a logo

Short story long, with a couple friends, we created a data meetup group in Charleroi. Since Charleroi has a mining history (coal mining), we named our meetup group Data Mineurs (Data miners), as a nice combination of the two worlds. I needed a logo to visualise the spirit of our group and its philosophy.

I started using the prompt and asking for some visuals to DALL-E.

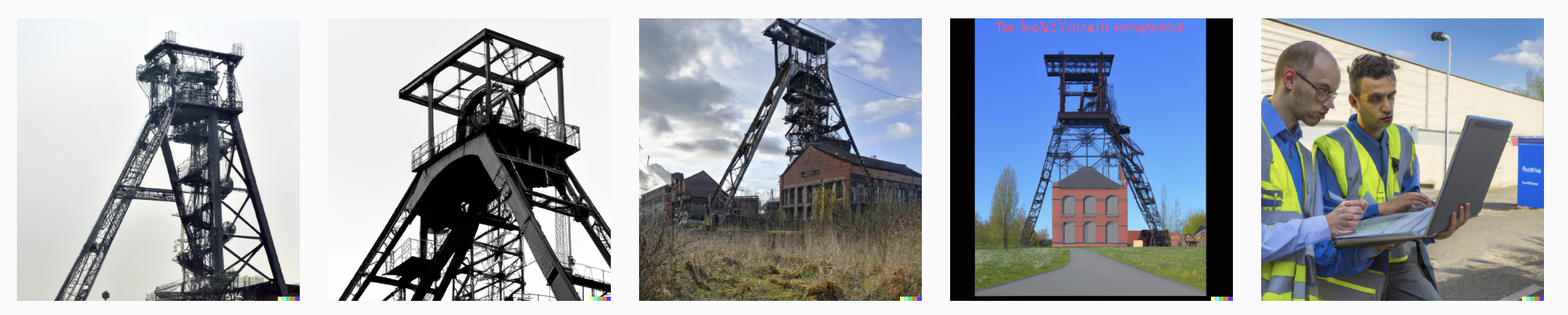

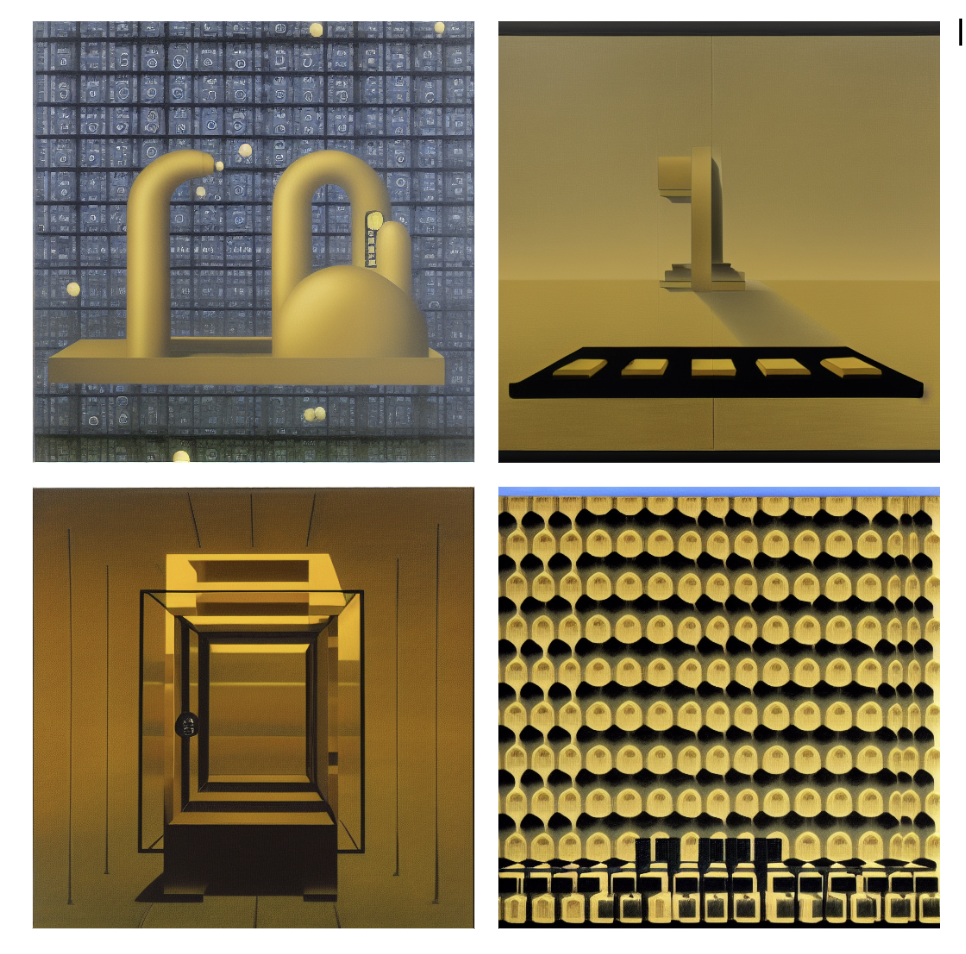

For example Data miners Charleroi, returned the following images:

The prompt is definitely too vague. There is too much room for interpretation and results are not satisfying. Of course, art is very subjective so it might be pleasing to some and we must concede that results are very impressive and very photo realistic.

I started playing around a bit, changing the wording and being more explicit in my requests. A very cool feature of the tool is that you can customise your images based on a style, an author or even a famous painter. Indeed, the model has been developed using a lot of real pictures, photos and paintings so you can a style, a painter name to your prompt to refine your request.

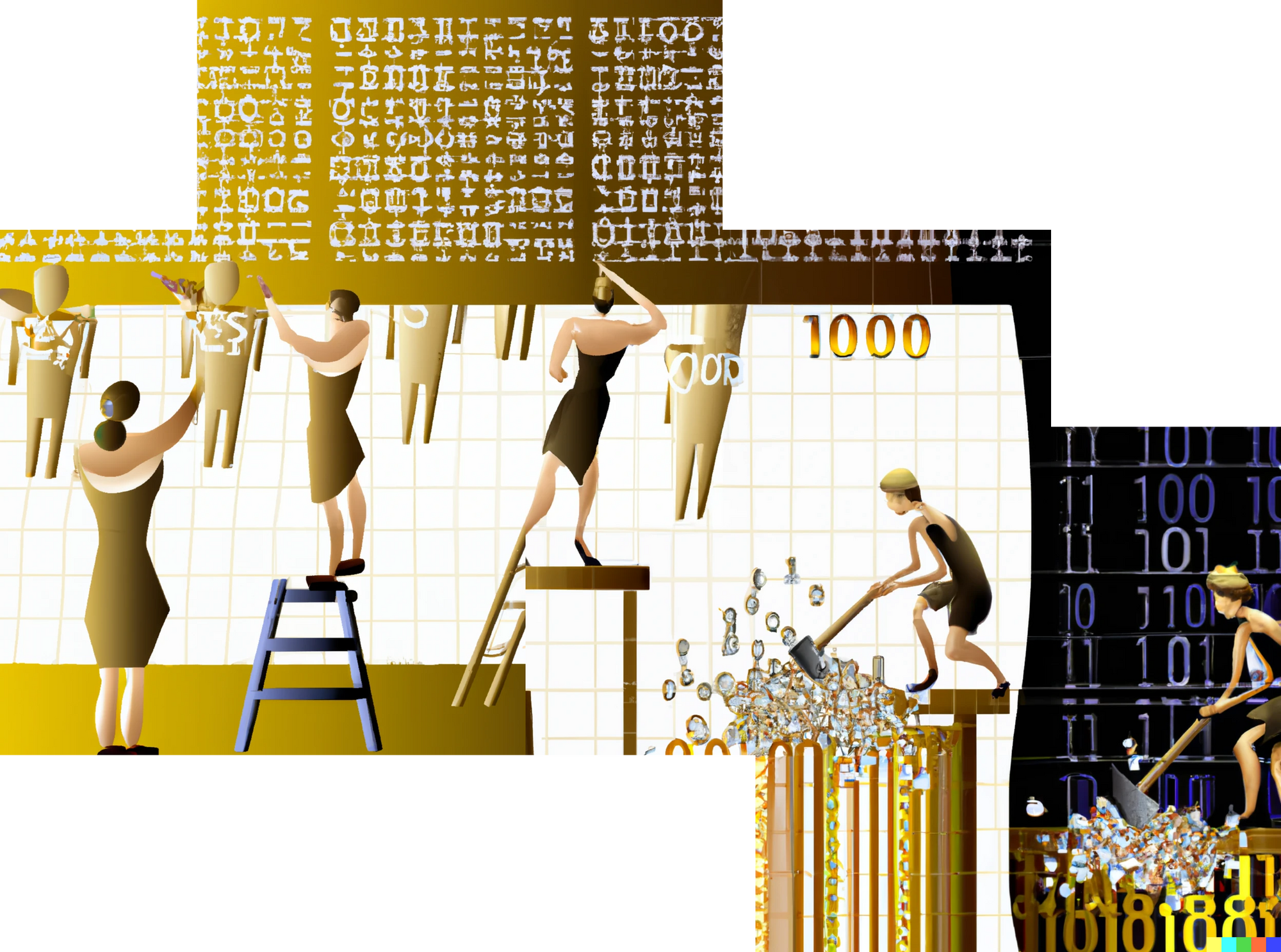

With the prompt Miners mining binary golden data coal in Charleroi, as a vector art, you already get a picture looking like a logo.

These models are not deterministic so if you enter twice the exact same sentence, you'll not get the exact same results. (Note that you can get deterministic results if you use the same words and the same seed).

Another feature of the tool is to create variations of a picture:

The out-painting feature is also very cool. It allows you to complete the initial picture, on the sides, top, bottom, leading to this kind of visuals.

As you can see, there are quite a lot of awesome features, and you can spend a lot of time creating nice visuals with DALL-E. It really is fascinating how these pictures are generated simply from a few words.

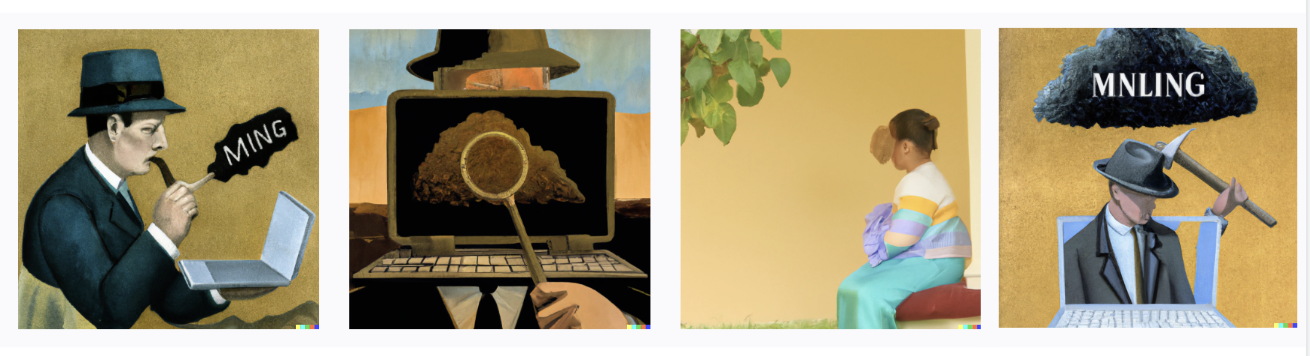

While brainstorming with the meetup team, we came up with the idea to use Magritte to style our logo. René Magritte is a famous Belgian impressionist who spent his childhood near Charleroi, so what better artist to give his style to our meetup’s logo?

Again, the results are astounding. You immediately recognise the style of Magritte, the coal and the data mining.

Similarities and differences

Of course I was also very curious about how Midjourney and Stable diffusion would fare in a similar setup.

I'm not gonna do another detailed description of the tests conducted. I can positively conclude that the quality of images and the creativity that these tools demonstrate is similarly impressive as with DALL-E.

The same prompt was submitted to Midjourney.

And to stable diffusion

Actually, Fabian Stelzer, who describes himself as a 'prompt intern' has done an extensive comparison of the three tools, if you are interested to read more about the differences between the tools.

If there are people calling themselves 'prompt intern', I'm suspecting there will be soon new opportunities and jobs related to mastering these tools. I consider myself not a pro and I've always been quite bad dealing with artistic assignments. I'm more the pragmatic and efficient type of person, so go check what great art other people have been creating and get inspired to style your creations and check the below tips to create better prompts.

While playing with the tools, and as you can imagine, you can spend quite some time creating images, experimenting with the features, etc. My mind was wandering around and I was asking myself a lot of questions, which I'm gonna try to summarise and answer below.

How does it work (in a nutshell)?

First question that came to mind is how does it actually work? How is it possible that with a simple sentence, a picture gets generated?

DALL-E 2 is actually a totally new model compared to DALL-E 1. DALL-E 2 is a diffusion model (GLIDE), it produces images and can also edit them, based on text prompts. It actually takes a noisy image as input, and with the guidance of the text, removes noise multiple times (150). When given a totally new prompt, at inference, the model starts with complete noise and updates the image according to the process learnt. You can actually see it in the below gif.

Previous work already used diffusion models to generate images of a certain category, based on random noise. Now with the text prompt, it went one step further. Instead of creating an image from a category, it creates a photo-realistic representation of the text, following the same principles.

Thus, after collecting millions of image-text pairs, they incrementally added noise to the picture and produced 150 version of the original pair. The model was trained to remove a bit of noise at each step, based on the text input, until it recreated the initial picture. To boost the performance of the model, the model first removes the noise, without the text and another time with the text. It then compares both versions of the images and uses the difference to remove the noise. Similarly, for image edition, only the part that needs to be edited is noised out and the same exact process follows.

We will not further go into the details in this article but you can read the follow post if you are interested in a deep dive.

Black boxes, explainable AI and ethics

Once I understood the inner workings of the model, and as much as I appreciate an elegant solution, my trail of thoughts continued and other questions came to mind. What can these tools do? How can we make sure that we do not reproduce biases? To which extent do we still control what they generate?

Indeed, not only are these models what we call black boxes, as it it impossible to understand how their decisions are made, they also have been using billions of images to train the models.

As with any machine learning model, there is a risk to reproduce or produce biases or inappropriate content. Bias usually comes from the way the training set is created or selected. Under-representation of some classes can cause bias in the outcome. For example, if in the dataset, there are mainly images of men working in mines, then most likely, when asked about a picture of miners, the model will output only men, continuing the vicious circle and spreading existing biases.

Since in the case of the diffusion models, there has been billions of images used to create the model, it is very difficult to understand and check the data distribution and potential biases that certainly are present in the data.

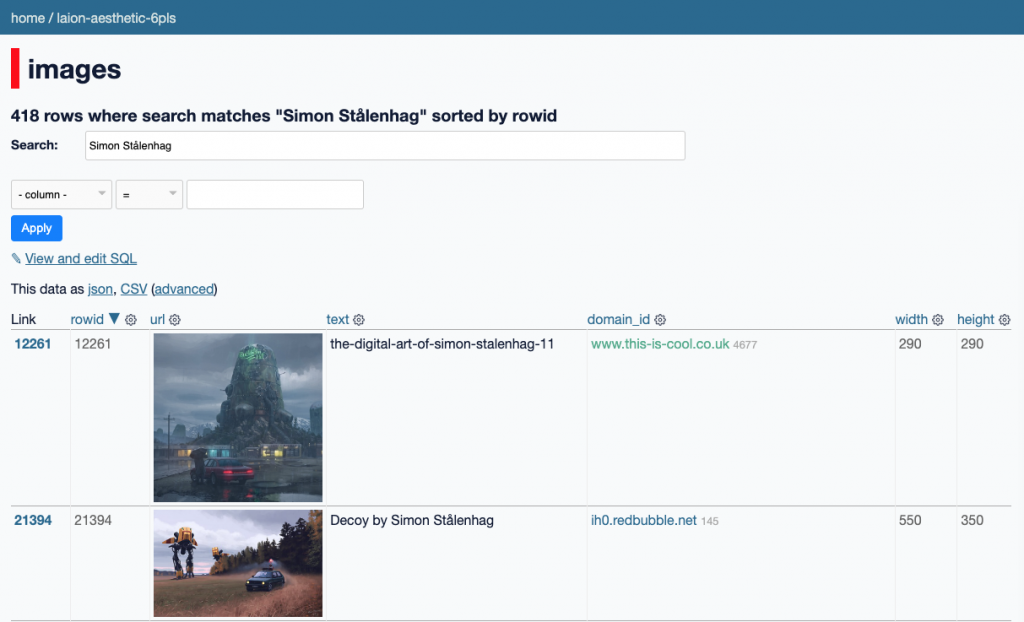

Even with these very cool initiatives that enable you to browse through part of the data used to train stable diffusion, it's not easy to estimate how much bias is included in the training set of the diffusion models.

So how can we make sure that the content does not contain biases? One technique used to detect and control for bias is to look at the important factors that influenced the model's results. Unfortunately, the high complexity of the model (so many parameters and connections) makes it very difficult to explain. Of course, even if the model is not fully explainable, as per a human definition, there has been a lot of advancement in the field of explainable AI. There are now tools like Microscope which enable you to partially understand when certain neurons are activated, to which type of content this is linked, and inappropriate usage of the tool can partially be prevented.

This entails implementing these controls as part of the model pipeline, which means you need to control the process. For example, Open AI explicitly blocks prompts being NFSW (Not Safe for Work) and by default Stable Diffusion rickrolls images generated with a high NFSW score (even if it’s easily bypassed when run locally).

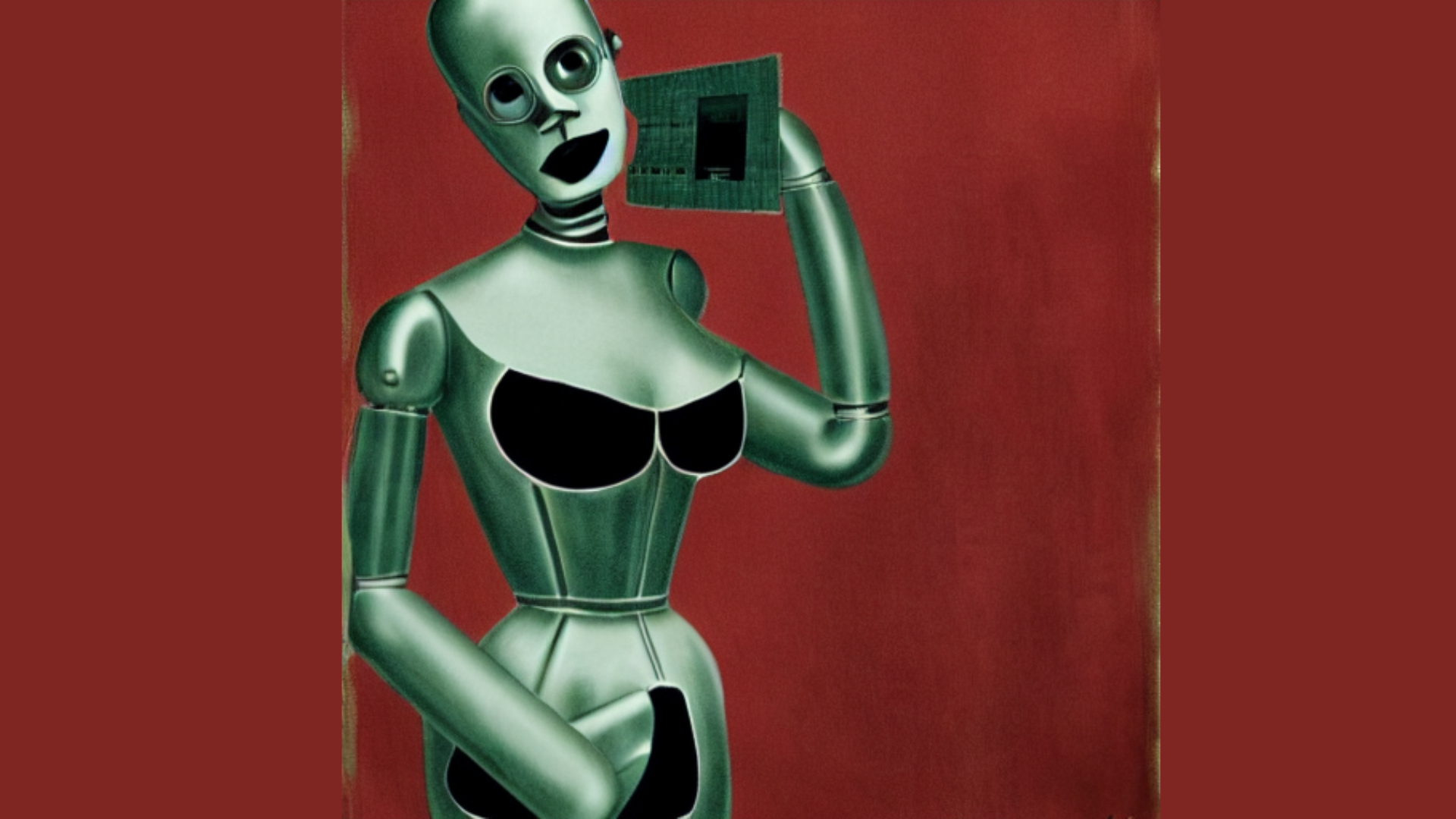

But when models are open sourced and can be used by anyone, we already see some variants, with questionable intent.

Diffusion and image generation models are very powerful tools, but are difficult to fully understand, monitor and quite easy to misuse. Which raises other types of questions on ownership and accountability. Do we need to give permits of use? Do we need to only allow the big players to distribute these models?

Fake news, copyrights and winning contests AI

While dreaming about the possibilities that the diffusion models unlock, I wondered whether we will ever be able to distinguish a picture generated by AI from a picture generated by a human? Even more interesting is the definition of art and artist. Have you heard that recently an AI won an art contest?

/https://tf-cmsv2-smithsonianmag-media.s3.amazonaws.com/filer_public/91/31/91312024-89f0-46c8-91a8-b9345fd64a92/screen_shot_2022-09-02_at_23648_pm.png)

As you can imagine, the AI winning the award was followed by a heated debate about the definition of art and AI. This is actually not the first time that an AI is winning a contest, think about Deep Blue beating Kasparov, Alpha Go becoming the top Go champion. This was very different, the rules were clear and the AI was just using mathematics to beat a human, using mathematical strategies, it did generate more awe than polemic. But now things are different, we are seeing more and more AI capable of Creation; an attribute that we had linked with the human condition. Underneath, it's still maths, maths that creates art. Painters, artists, architects, etc already use a lot of maths, to calculate harmony, aesthetics, etc (think golden ratio and Da Vinci, a master of arts, physics and much more). Still, in our society, a way to define humans from animals is their capabilities to create and appreciate art. With the diffusion models, the process of creating art is no longer restricted to humans. We see more and more AI capable to generate astonishing art and it becomes very difficult to distinguish art generated by a machine from art generated by a human.

I see a future where most art is generated using AI, and novices who have great creativity but little drawing skill will be able to participate. Andrew NG in the Batch

It actually raises multiple ethical and legal questions about whether an AI can be entitled to copyrights. Can an AI generate art or other inventions and get a patent or win a contest?

While we are talking about copyrights, often times, the permission of the owners of the data was not asked. Thus diffusion models were trained on data which was not necessarily acquired in a lawful way. So if you have enough computation power, storage and can scrape millions of images from the internet, does that entitle you to create an AI that will win art contests? Who is the winner of the contest? The AI, the owner of the AI, it is shared between the people that created the images used for training? There are still a lot of ethical and legal aspects that needs to be clarified and regulated.

What's next?

Image generation is not yet fully mature but certainly evolving very rapidly. We see it getting integrated in many art editors and tooling. We cannot predict the future of these models, yet, they are definitely great tools and probably will give an edge to artists that use them rather than artists that don't. Only the future can tell ..