By Xuyao Zhang

At dataroots, we use Notion databases to keep track of our project portfolio and ongoing initiatives. Ricardo has implemented a NotionAPI to validate the data in a Notion database. This post describes one way to automate that process in Azure.

There are many ways to design an automation pipeline that runs data quality checks, but any design needs these components: version control (e.g. GitHub, Azure DevOps), a CI/CD platform (e.g. GitHub Actions, Azure Pipelines), a scheduler (e.g. Cron, Airflow, Prefect), a machine or agent to run the program, and a place (local or in the cloud) to store the validation results.

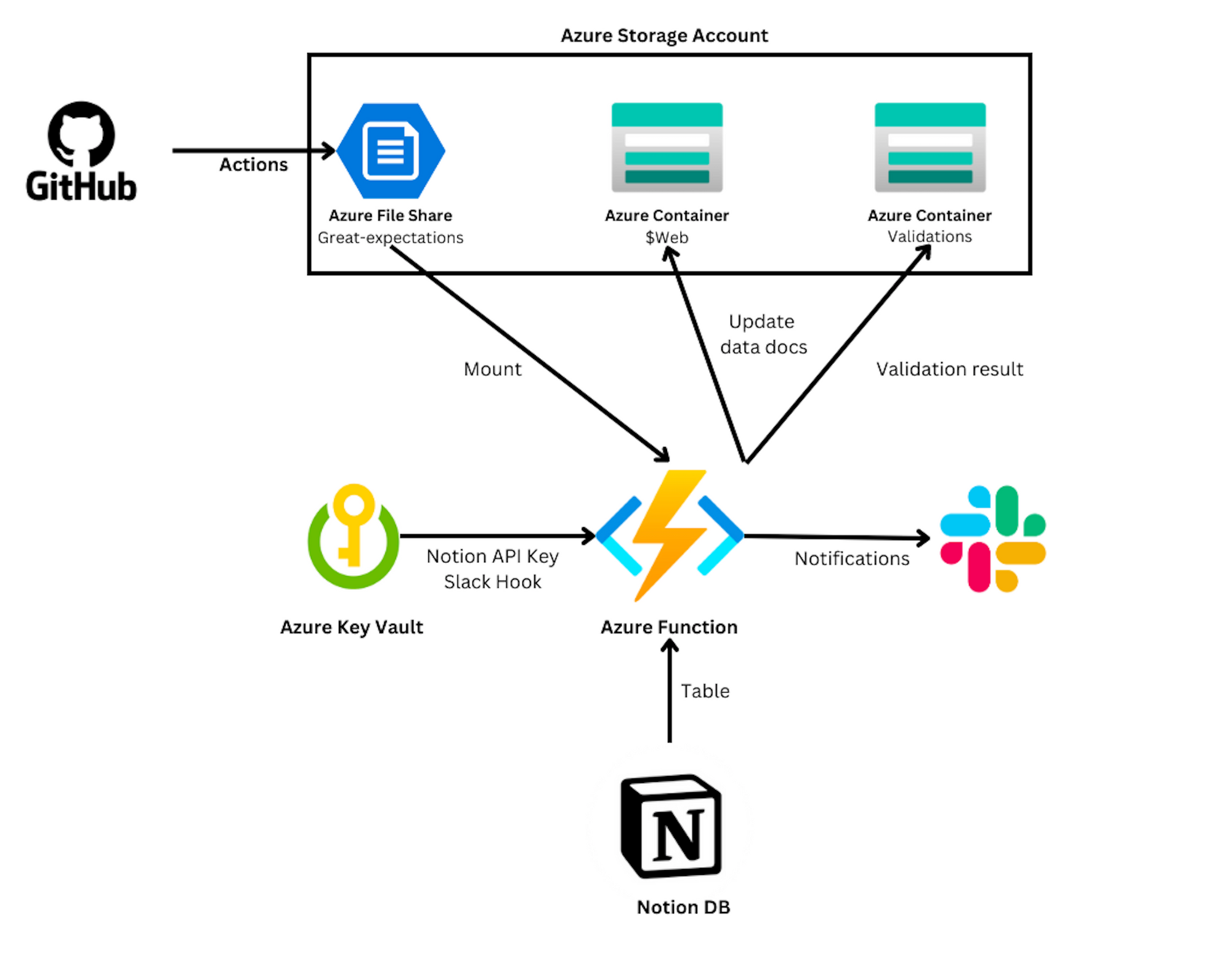

In our design, we implemented a streamlined architecture (see below) using GitHub Actions and a few Azure services. The following sections explain how it works.

Azure Functions

Azure Functions is a good candidate if you only need to run simple functions and do not want to manage the infrastructure yourself. In our case, we scheduled a Python (3.9) function that does the following:

- First, it reads the data from the Notion database into a pandas dataframe, using the Notion API key and the database id.

- Second, it creates a runtime batch request for the pandas dataframe.

- Finally, it takes the corresponding expectations and runs the checkpoint against that batch.
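The three steps above can be sketched roughly as follows. This is a minimal illustration rather than our actual function: it calls the public Notion REST endpoint directly instead of going through a wrapper, it flattens only a handful of property types, and the datasource, connector, asset, suite and checkpoint names are all hypothetical. The Great Expectations calls assume a release that still ships the V3 `RuntimeBatchRequest` API and the `DataContext` class, so they may need adjusting for other versions.

```python
import json
import urllib.request

NOTION_VERSION = "2022-06-28"  # assumed value for the Notion-Version header


def query_database(api_key, database_id):
    """Step 1a: fetch every page of a Notion database, following pagination."""
    url = f"https://api.notion.com/v1/databases/{database_id}/query"
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Notion-Version": NOTION_VERSION,
        "Content-Type": "application/json",
    }
    pages, cursor = [], None
    while True:
        body = {"start_cursor": cursor} if cursor else {}
        request = urllib.request.Request(
            url, data=json.dumps(body).encode(), headers=headers, method="POST"
        )
        with urllib.request.urlopen(request) as response:
            payload = json.load(response)
        pages.extend(payload["results"])
        if not payload.get("has_more"):
            return pages
        cursor = payload["next_cursor"]


def page_to_row(page):
    """Step 1b: flatten one Notion page into a plain dict (one dataframe row)."""
    row = {}
    for name, prop in page["properties"].items():
        kind = prop["type"]
        value = prop[kind]
        if kind in ("title", "rich_text"):
            row[name] = "".join(part["plain_text"] for part in value)
        elif kind == "select":
            row[name] = value["name"] if value else None
        elif kind in ("number", "checkbox"):
            row[name] = value
        else:
            row[name] = None  # other property types are left out of this sketch
    return row


def validate(pages, context_root_dir):
    """Steps 2 and 3: build a runtime batch request and run the checkpoint."""
    # Imported lazily so the helpers above work without these packages.
    import pandas as pd
    from great_expectations.core.batch import RuntimeBatchRequest
    from great_expectations.data_context import DataContext

    df = pd.DataFrame([page_to_row(page) for page in pages])
    context = DataContext(context_root_dir=context_root_dir)
    batch_request = RuntimeBatchRequest(
        datasource_name="notion_pandas",           # hypothetical
        data_connector_name="runtime_connector",   # hypothetical
        data_asset_name="project_portfolio",       # hypothetical
        runtime_parameters={"batch_data": df},
        batch_identifiers={"run_id": "scheduled"},  # key must exist in the connector config
    )
    return context.run_checkpoint(
        checkpoint_name="notion_checkpoint",        # hypothetical
        validations=[
            {"batch_request": batch_request, "expectation_suite_name": "notion_suite"}
        ],
    )
```

A scheduled entry point would then call `validate(query_database(api_key, database_id), context_root_dir)`, where `context_root_dir` points at the Great Expectations project folder.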

Azure Key Vault

We use Azure Key Vault to store sensitive information: the Notion API key (to fetch the Notion data), the Azure connection string for the storage account, and the Slack webhook (to send validation results to Slack). These secrets are then exposed to the Azure Function as environment variables.
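Inside the function, the secrets are then just environment variables. A small helper along these lines can read them and fail fast when one is missing. The variable names are hypothetical; use whatever names your application settings define.

```python
import os

# Hypothetical setting names, one per secret described above.
REQUIRED_SETTINGS = (
    "NOTION_API_KEY",
    "AZURE_STORAGE_CONNECTION_STRING",
    "SLACK_WEBHOOK",
)


def load_settings(environ=None):
    """Return the required secrets as a dict, or raise if any is missing or empty."""
    environ = os.environ if environ is None else environ
    missing = [name for name in REQUIRED_SETTINGS if not environ.get(name)]
    if missing:
        raise RuntimeError("Missing application settings: " + ", ".join(missing))
    return {name: environ[name] for name in REQUIRED_SETTINGS}
```

Checking all settings up front means a misconfigured deployment fails at the start of a run, with one clear message, instead of halfway through a validation.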

Azure Storage Account

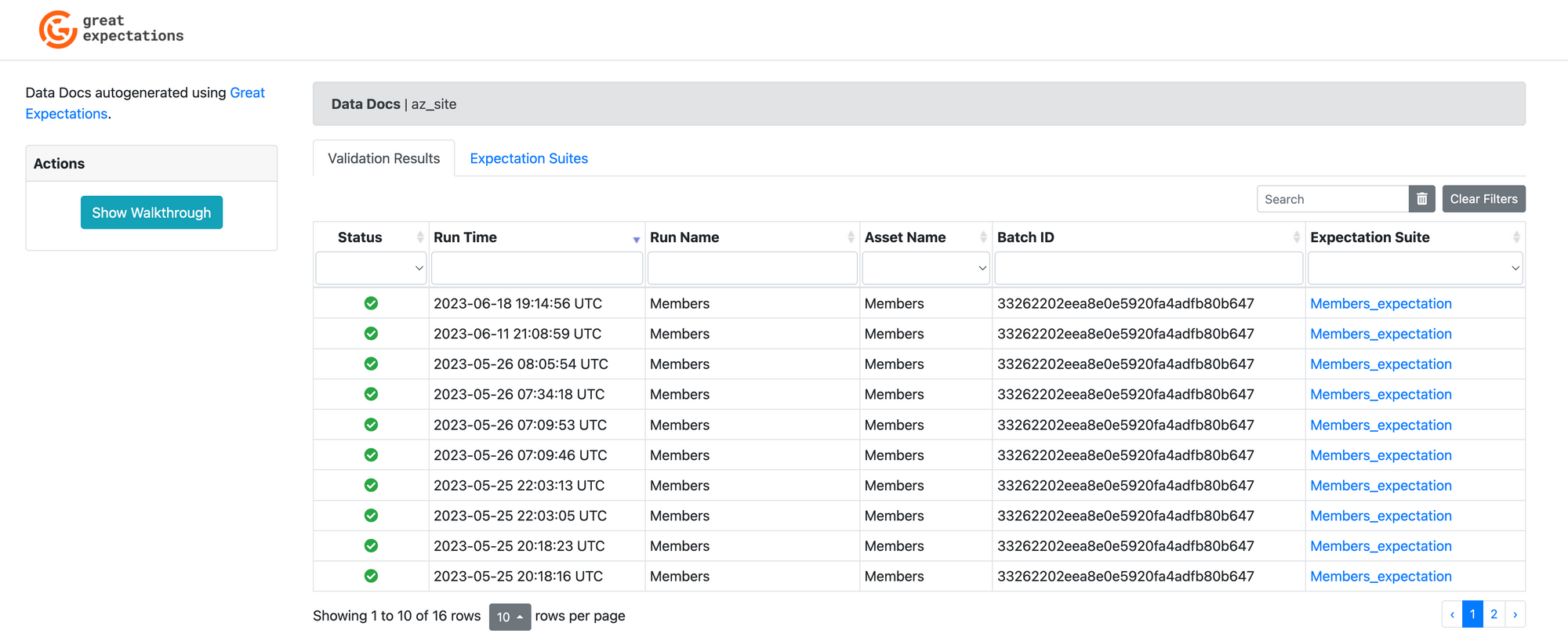

We created 3 Azure storage services: 1 Azure file share and 2 Azure blob storage containers. The file share stores the Great Expectations project. Because the share is mounted on the Azure Function, the Function can load the data context and perform the relevant actions. In the configuration, the expectations are stored in the file share, while the data docs and validations are stored in blob storage. One of the blob containers has the static website feature enabled, so that people get a web interface for the validation results (see the screenshot below). The Slack notification action is also configured in the project, specifically in the checkpoint folder.
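To make the layout concrete, here is roughly what the relevant configuration amounts to, written as Python dictionaries that mirror the entries you would normally keep in `great_expectations.yml` and in the checkpoint file. Treat it as an illustrative sketch: the container names, the `${...}` variable names and the action name are assumptions, and only the class names come from Great Expectations itself.

```python
def azure_blob_backend(container, prefix=""):
    """Store backend that writes to an Azure blob container."""
    backend = {
        "class_name": "TupleAzureBlobStoreBackend",
        "container": container,
        # Hypothetical variable name, resolved from the environment at load time.
        "connection_string": "${AZURE_STORAGE_CONNECTION_STRING}",
    }
    if prefix:
        backend["prefix"] = prefix
    return backend


def project_stores():
    """Expectations stay on the mounted file share, validations go to blob storage."""
    return {
        "expectations_store": {
            "class_name": "ExpectationsStore",
            "store_backend": {
                "class_name": "TupleFilesystemStoreBackend",
                "base_directory": "expectations/",  # relative to the project on the share
            },
        },
        "validations_store": {
            "class_name": "ValidationsStore",
            "store_backend": azure_blob_backend("validations"),  # hypothetical container
        },
    }


def data_docs_sites():
    """Data docs rendered into the container that backs the static website."""
    return {
        "azure_site": {
            "class_name": "SiteBuilder",
            "store_backend": azure_blob_backend("$web"),  # Azure's static website container
            "site_index_builder": {"class_name": "DefaultSiteIndexBuilder"},
        }
    }


def slack_action():
    """Checkpoint action that posts the validation result to Slack."""
    return {
        "name": "send_slack_notification",  # hypothetical action name
        "action": {
            "class_name": "SlackNotificationAction",
            "slack_webhook": "${SLACK_WEBHOOK}",  # hypothetical variable name
            "notify_on": "all",
            "renderer": {
                "module_name": "great_expectations.render.renderer.slack_renderer",
                "class_name": "SlackRenderer",
            },
        },
    }
```

Keeping the connection string and webhook as variable references rather than literal values means the project folder on the file share never contains the secrets themselves.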

GitHub Actions

The build and manage procedure is automated through a CI/CD pipeline in GitHub. To keep things simple, it performs only 3 steps:

- Log in to Azure

- Check out the source code

- Deploy the Great Expectations folder to the file share
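For readers who want to see what the last step boils down to, the sketch below mirrors a local project folder onto a file share with the `azure-storage-file-share` SDK. It is not the workflow we run in GitHub Actions, only a Python illustration of the same idea. The function names are hypothetical, and it assumes the share already exists and that the folder should land at the root of the share.

```python
from pathlib import Path


def plan_upload(local_root):
    """List the directories to create and the (local, remote) file pairs to upload."""
    root = Path(local_root)
    # Sorting puts every parent directory before its children.
    dirs = sorted(p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_dir())
    files = sorted(
        ((p, p.relative_to(root).as_posix()) for p in root.rglob("*") if p.is_file()),
        key=lambda pair: pair[1],
    )
    return dirs, files


def deploy(local_root, connection_string, share_name):
    """Create the directory tree on the share, then upload every file."""
    # Imported lazily so plan_upload works without the Azure SDK installed.
    from azure.core.exceptions import ResourceExistsError
    from azure.storage.fileshare import ShareClient

    share = ShareClient.from_connection_string(connection_string, share_name=share_name)
    dirs, files = plan_upload(local_root)
    for remote_dir in dirs:
        try:
            share.get_directory_client(remote_dir).create_directory()
        except ResourceExistsError:
            pass  # directory is already there from an earlier deployment
    for local_path, remote_path in files:
        with open(local_path, "rb") as handle:
            share.get_file_client(remote_path).upload_file(handle)
```

Separating the planning from the upload makes it easy to print or review what would change before touching the share.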

In this way, the data context is separated from the validation function. This works well for us because we frequently add or update expectations and seldom modify the validation function. It would be nice to have another workflow that deploys the entire infrastructure to Azure in the future, though.

Further improvement

In this article, we described an Azure infrastructure for running Great Expectations on a Notion database. Several things can be improved in the future:

- Create another GitHub workflow to deploy the whole infrastructure

- Investigate how to better visualise the data docs without keeping all validation results in Azure storage

- Possibly build a customised web interface to check the validation results

References